Siyuan Liang

Long-context modeling & recurrent architectures

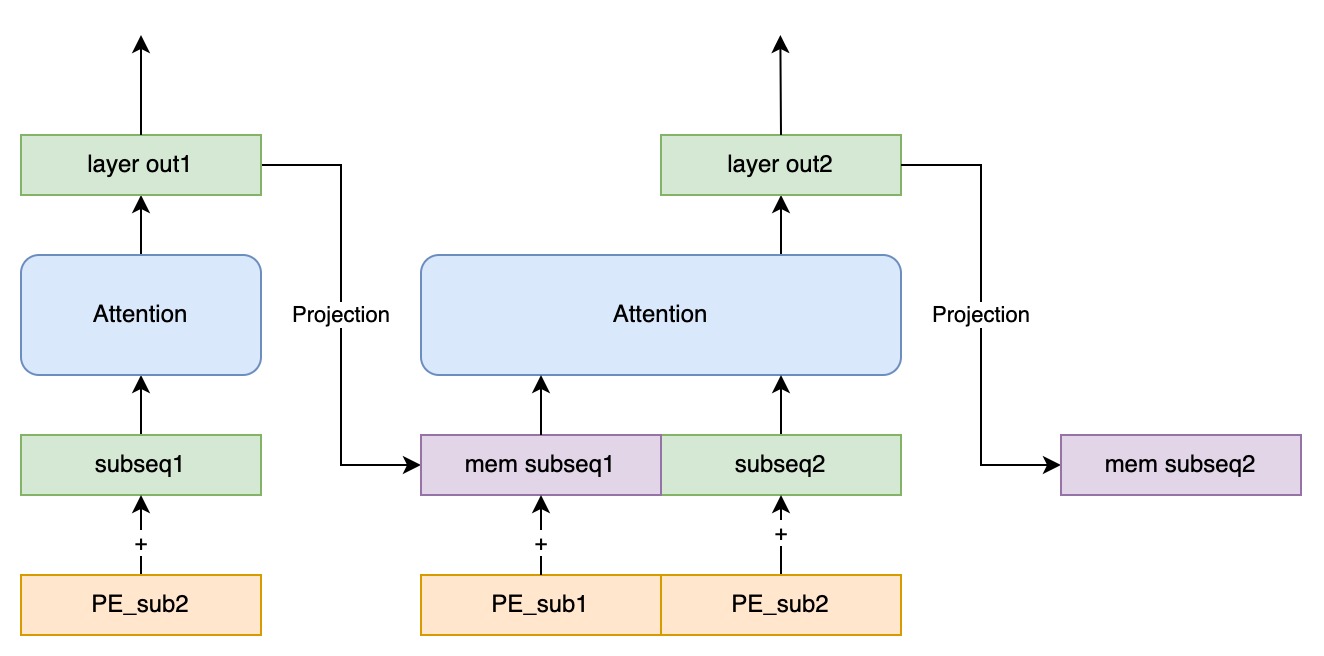

Siyuan Liang (梁思远) works on long-context modeling and recurrent architectures for sequence models. He proposed the Truncated Recurrent Transformer, which targets strong length extrapolation in train-short, test-long settings.

Previously, he worked as an algorithm researcher at Megvii in Beijing, delivering production algorithms for fingerprint and face liveness, display demura, and XR hand tracking.

He received his M.S. in Electronic and Communication Engineering from Xidian University, where his research centered on deep-learning-based radar anti-jamming detection and intelligent electromagnetic games.

llama2RNN.c — Truncated Recurrent Transformer implementations in C

LEDiT — PyTorch Implementation, NeurIPS 2025

SimpleDG — Training and test code for the ECCV 2022 workshop NICO challenge

latest posts

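| Date | Post |
|---|---|
| Jun 01, 2019 | World Models (II): Intelligent Electromagnetic Game (Chinese version) |
| Jun 01, 2019 | World Models (II): Intelligent Electromagnetic Game |
| Mar 31, 2019 | World Models (I): The Union of Memory, Perception, Prediction, Evaluation, and Decision-Making (Chinese version) |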